Genetic algorithms are "intelligent" algorithms: they evolve data in much the same way that genetic code evolves in nature.

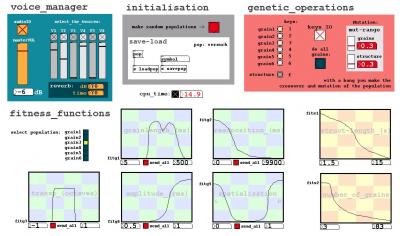

In this improvisation environment, a performer interacts with the computer (= the genetic algorithm) and controls the composition. In this "communication system", the computer and the human are equal partners and work with each other.

For sound synthesis, GenGran uses granular synthesis.

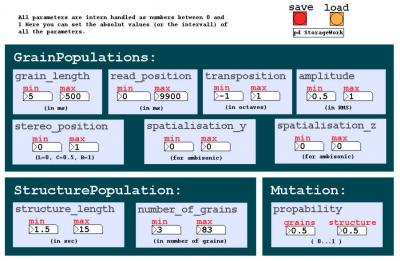

Each grain is like a human gene. It carries specific information that determines the sound, length, etc. of the grain (see picture). The sum of these grains is a population (= an individual sound stream).
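As a rough illustration of the grain-as-gene idea, a grain could be modelled as a small record of parameters and a population as a list of such records. The field names (`sample_pos`, `duration_ms`, `pitch`, `amplitude`) and their value ranges are assumptions made for this sketch; the actual grain parameters in GenGran are the ones shown in the picture.

```python
import random
from dataclasses import dataclass


@dataclass
class Grain:
    # All fields are hypothetical stand-ins for the real grain parameters.
    sample_pos: float   # read position in the source sample, 0.0..1.0
    duration_ms: float  # grain length in milliseconds
    pitch: float        # playback-rate factor (1.0 = original pitch)
    amplitude: float    # linear gain, 0.0..1.0


def random_grain(rng):
    """Create one grain with randomly chosen parameter values."""
    return Grain(
        sample_pos=rng.random(),
        duration_ms=rng.uniform(10.0, 100.0),
        pitch=rng.uniform(0.5, 2.0),
        amplitude=rng.random(),
    )


def random_population(size, rng):
    """A population is simply a list of grains (one sound stream)."""
    return [random_grain(rng) for _ in range(size)]


rng = random.Random(0)
population = random_population(16, rng)
```

Keeping every parameter in one flat record makes it easy for a genetic algorithm to recombine or alter individual values later on.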

In GenGran there are six parallel grain populations and one structure population.

The grain populations evolve in parallel and have access to different samples, so each population can have its own sound character.
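The parallel evolution described above could look roughly like the following sketch, in which six independent populations each go through one generation of selection, crossover and mutation. This is a generic textbook-style genetic algorithm, not GenGran's actual code: the genome layout, the sample file names, the mutation rate and the `placeholder_fitness` function are all assumptions. In a live setting, the fitness would more plausibly come from the performer's interaction.

```python
import random

NUM_POPULATIONS = 6   # from the text: six parallel grain populations
GENES_PER_GRAIN = 4   # assumption: four normalized parameters per grain


def crossover(a, b, rng):
    """One-point crossover of two equal-length genomes."""
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]


def mutate(genome, rate, rng):
    """Replace each gene by a new random value with probability `rate`."""
    return [rng.random() if rng.random() < rate else g for g in genome]


def next_generation(population, fitness, rng, rate=0.1):
    """Use the fitter half as parents and breed a new population of equal size."""
    ranked = sorted(population, key=fitness, reverse=True)
    parents = ranked[: max(2, len(ranked) // 2)]
    children = []
    for _ in range(len(population)):
        a, b = rng.sample(parents, 2)
        children.append(mutate(crossover(a, b, rng), rate, rng))
    return children


def placeholder_fitness(genome):
    # Hypothetical stand-in; it simply prefers larger parameter values.
    return sum(genome)


rng = random.Random(1)

# Each population is tied to its own sample (file names are made up).
populations = {
    f"sample_{i}.wav": [
        [rng.random() for _ in range(GENES_PER_GRAIN)] for _ in range(8)
    ]
    for i in range(NUM_POPULATIONS)
}

# One generation: every population evolves independently of the others.
populations = {
    name: next_generation(pop, placeholder_fitness, rng)
    for name, pop in populations.items()
}
```

Because the populations never exchange genomes in this sketch, each one can drift towards its own character, which matches the idea of six distinct sound streams.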

The structure population can also be modified by a genetic algorithm; it triggers the overall structure of the improvisation.

I made two versions of GenGran: GenGran-stereo and GenGran-multichannel.

GenGran-multichannel adds a further dimension to the improvisation: the spatialisation of the grains in the room. This can be realized with a variable number of speakers (2-24), positioned experimentally in the room, so I can react to the specific acoustic situation of the performance space.
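One possible way to spatialise a grain over freely placed speakers is to weight each speaker by its distance to the grain's virtual position. The sketch below uses simple inverse-distance weighting, normalized to constant total power. It is only an illustration: the function `speaker_gains`, the `rolloff` parameter and the speaker coordinates are assumptions, and the panning method actually used in GenGran-multichannel is not described here.

```python
import math


def speaker_gains(grain_pos, speaker_positions, rolloff=1.0, eps=1e-6):
    """Inverse-distance gains, normalized so the squared gains sum to 1."""
    raw = []
    for sp in speaker_positions:
        d = math.dist(grain_pos, sp)            # Euclidean distance
        raw.append(1.0 / (d + eps) ** rolloff)  # closer speakers get more gain
    norm = math.sqrt(sum(g * g for g in raw))
    return [g / norm for g in raw]


# Four speakers placed irregularly in a room (coordinates in metres, made up).
speakers = [(0.0, 0.0), (5.0, 0.5), (4.0, 6.0), (-1.0, 4.0)]
gains = speaker_gains((1.0, 1.0), speakers)
```

Since the function only needs a list of positions, the same code works for any number of speakers and any layout, which suits an experimental setup that changes from venue to venue.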

My interest in GenGran lies in this special form of interactivity: the performer not only determines the evolving composition but is also influenced by the genetic algorithm, and vice versa!

GenGran is realized with the open source software PD (Pure Data) and some additional code written in C.

duration: 10 to 45 minutes